| To Mine Or Not To Mine - Dec 9 |

Most of the world (myself included) recently 'woke up' to all things cryptocurrency. Once word of Bitcoin's meteoric rise got out to the masses, everyone naturally started wondering how they could cash in on what is either the beginning of a fundamental technological and societal change or just the latest bubble waiting to pop. I'll leave that discussion to others, but it did make me curious about mining - the process of using your computer to create new coins.

I won't go into all the technical details, but Bitcoin and other so-called alt-coins use a technology called blockchain to verify the electronic transactions used when transferring money. So what is mining? From another source: "A mathematical proof of work, created by trying billions of calculations per second, is required to confirm a Bitcoin transaction." So when people use their computers to mine, they are contributing to a vast global network of computing power that processes these transactions. There seem to be two kinds of systems used for mining: purpose-built machines running custom chips (ASICs), and generic computers using multiple graphics cards (GPUs). People going the generic route typically build open-air systems with 6-7 GPUs. Why the focus on GPUs? Although they exist to drive a computer's display, for certain kinds of work they can crunch numbers much faster than the computer's main processor (CPU). Building a rig is obviously not cheap. But hey, I already have a decent computer with a kick-ass video card. What about simply using it?
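The toy proof-of-work sketch below makes the "billions of calculations" idea concrete. It simplifies heavily and is not Bitcoin's actual algorithm. Bitcoin double-hashes an 80-byte block header with SHA-256 and compares the result to a numeric target, and Ethereum uses yet another algorithm. The block text and the difficulty (number of leading hex zeros) are arbitrary choices for illustration.

```python
import hashlib


def pow_hash(block_data: str, nonce: int) -> str:
    """SHA-256 of the block data with the nonce appended, as a hex string."""
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int) -> tuple:
    """Brute-force the smallest nonce whose hash starts with `difficulty` zeros.

    Each extra hex zero makes the search about 16x longer on average, which is
    why real miners need enormous hash rates.
    """
    target_prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = pow_hash(block_data, nonce)
        if digest.startswith(target_prefix):
            return nonce, digest
        nonce += 1


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking someone else's answer costs a single hash."""
    return pow_hash(block_data, nonce).startswith("0" * difficulty)


if __name__ == "__main__":
    nonce, digest = mine("alice pays bob 1 coin", difficulty=4)
    print(f"nonce={nonce} hash={digest}")
    print("valid:", verify("alice pays bob 1 coin", nonce, difficulty=4))
```

The asymmetry is the point. Finding a valid nonce takes many attempts, while anyone can check it with one hash.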

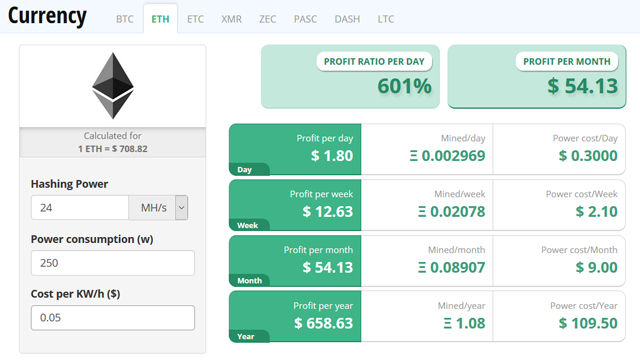

While Bitcoin is front and center in the media right now, I'm more interested in Ethereum. Its price isn't even in the same ballpark, but I think the technology behind it is superior. Since it has the backing of industry heavyweights such as Intel and Microsoft, I think it's more likely to succeed long term. So I went online, looked up the required input values and plugged them into an online mining calculator. The results clearly show that it is not worth using my existing gaming PC to mine Ether. Guess I won't be getting rich anytime soon. I did some more research and apparently my video card - an Nvidia GTX 1080 - is known to not be the best option for mining. It might be awesome for gaming, but not for this application. So as a comparison I looked up the values for AMD's latest and greatest, and sadly the results were similar. Bottom line: you will not make money mining on a typical gaming PC. It's really all about scale, which is why serious miners have racks upon racks of rigs with 6-7 GPUs each, all churning away 24x7. So unless you're willing to make a serious hardware commitment, you're much better off just buying the currency directly.
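The post doesn't name the calculator it used, but mining calculators generally boil down to the same back-of-the-envelope arithmetic. You take your share of the network's hash rate, multiply by the coins issued per day, and subtract what the electricity costs. The Python sketch below follows that model under stated assumptions. It treats the network hash rate as steady and ignores hardware cost, difficulty changes, transaction fees and price swings. Every input is a value you would look up yourself.

```python
def daily_mining_profit(
    hashrate: float,          # your rig's hash rate (same unit as network_hashrate)
    network_hashrate: float,  # total hash rate of the whole network
    blocks_per_day: float,    # roughly 86_400 / average block time in seconds
    block_reward: float,      # coins paid out per block
    coin_price: float,        # price of one coin in your currency
    power_watts: float,       # wall power drawn by the machine while mining
    cost_per_kwh: float,      # electricity price in the same currency
    pool_fee: float = 0.0,    # fraction kept by a mining pool, e.g. 0.01 for 1%
) -> float:
    """Expected profit per day under a simplified model (negative = losing money)."""
    share = hashrate / network_hashrate
    coins_per_day = share * blocks_per_day * block_reward * (1 - pool_fee)
    revenue = coins_per_day * coin_price
    energy_kwh = power_watts / 1000 * 24
    return revenue - energy_kwh * cost_per_kwh
```

Consider toy numbers: a rig holding 1% of a network that issues 100 coins a day, with a coin price of 10, earns 10 a day. If the rig draws 1000 W at 0.50 per kWh, the electricity costs 12 a day, so `daily_mining_profit(1, 100, 100, 1, 10, 1000, 0.5)` comes out at -2.0.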

| I Need A Bridge - Nov 18 |

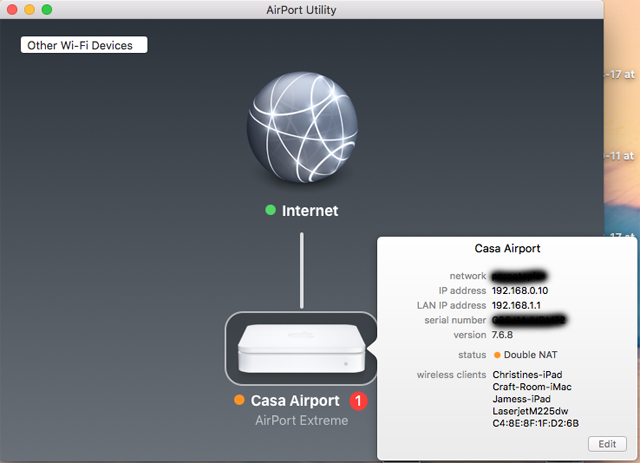

Last week I woke up and did my usual routine - pour myself a cup of coffee, head into the office, plunk down and log in to my iMac to check Facebook, check my email, and generally see what's happening in the world. However, I was annoyed to find that our Internet connection was down. In such cases I usually head downstairs and power cycle either the Apple Airport router or the cable modem itself. So I did that, went back upstairs to check - and still no Internet. Ok, let's check Shaw's website to see if there are any reported outages in my neighborhood. I grabbed my iPhone, switched off WiFi and browsed to Shaw's website, which mentioned that they had been doing maintenance overnight that was supposed to end at 6am. It was 8am, so obviously they weren't done. No problem, I'd finish my coffee, wait a few hours, and hopefully it would come back on its own. Later in the afternoon we were still down, so we checked with the neighbor and found that their Internet was working just fine. Now somewhat annoyed, I used my phone to open Shaw's support chat and talk with a technician. They couldn't contact my cable modem and weren't sure why it was down. The earliest they could send someone out to look at it was three days later. I had to go out of town anyway, so it wasn't a big deal for me, but it sucked for the wife, who was relegated to spending time at the local coffee shop to use their WiFi. Finally the day arrived and the tech showed up. We were using a (relatively) ancient Motorola cable modem, which the technician replaced with a Cisco DPC3825. These are full-blown routers with a built-in firewall, WiFi, etc. I told the tech that I wanted all that turned off, as I already have my Apple router configured for everything.
The tech assured me that he would do just that. After it was swapped out, he had me test things and we had Internet once again. However, I noticed that the Airport was complaining about being Double NAT'd. The tech said I just needed to power cycle it and everything would be fine.

At that point things seemed to be working ok, so I didn't think much more about it. But the next day I noticed our IP cameras (which rely on port forwarding) weren't working. I went back into the Airport Utility and noticed that the router's address had changed. After some more digging I realized that the new Cisco modem had taken over as a DHCP server. So I did some Googling and found out this is a common problem. Basically I was now running two routers, which is what the Airport meant by saying it was 'Double NAT'd'. The solution was to enable 'Bridge mode' on the Cisco modem, which turns it back into a dumb device. So I browsed to the Cisco's admin page, figured out the default password and logged in. I was annoyed to see that its WiFi was also enabled, so I turned that off. But I couldn't find the option to enable Bridge mode. Back to Shaw support I went, and after roughly 30 minutes they sent the command to the device to switch it. After it reset, the Apple router re-established contact, reverted to its previous address, and the status light went green. According to online forums, this was apparently a big problem when this new class of modems first came out. At the time the support people at the various ISPs didn't have a clue what 'Bridge mode' was, so there was much frustration all around. I guess this is one of those times where being a bit behind the technology curve was a blessing.
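You can spot a double NAT yourself by looking at the WAN (Internet) address your own router has been handed. If it's a private RFC 1918 address rather than a public one, another device upstream is also doing NAT, which is what the Cisco was doing here. Below is a minimal Python sketch of that check. The addresses in the example are made up (203.0.113.20 is from the documentation range). The check doesn't cover carrier-grade NAT addresses (100.64.0.0/10), which would also point to an upstream NAT.

```python
import ipaddress

# The three private IPv4 ranges reserved by RFC 1918.
RFC1918_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_behind_another_nat(wan_address: str) -> bool:
    """True if the router's WAN address is private, i.e. some device upstream
    (such as a modem running in router mode) is also doing NAT."""
    addr = ipaddress.ip_address(wan_address)
    return any(addr in network for network in RFC1918_NETWORKS)


if __name__ == "__main__":
    for wan in ("192.168.0.10", "203.0.113.20"):
        verdict = "double NAT" if is_behind_another_nat(wan) else "public address"
        print(wan, "->", verdict)
```

Once the modem is in bridge mode, the Airport's WAN address should go back to being a public one and the check returns False.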

| Whither Security? - Oct 14 |

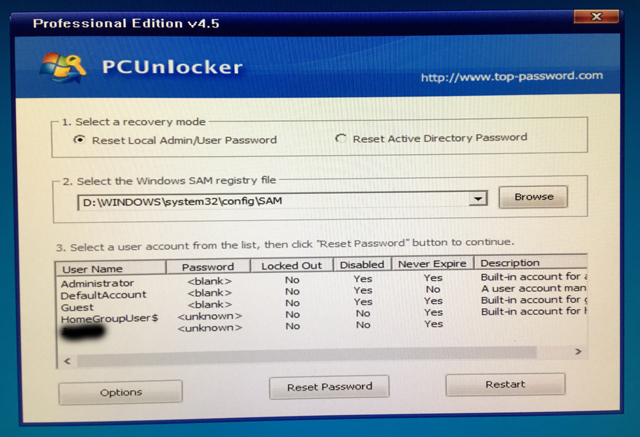

I was recently tasked at work with 'hacking into' a server from a company we had just acquired: we owned the hardware but weren't given any passwords or credentials. My first reaction was that this was going to be an ordeal if not outright impossible. At the least I expected to resort to some cracking program chugging away for hours, if not days, to crack the built-in Administrator password. Once I had that, I'd be able to log into the system and do anything I wanted. I did some Googling and found an article describing one way to do this. But what if the last logged-on user wasn't an Admin account? You might be able to log in, but you still wouldn't 'own' the system. A colleague mentioned a third-party tool called PCUnlocker, which I downloaded and tried out on a test server (I successfully tested it on both Windows Server 2012 R2 and Windows 10).

The nice thing about this program is that it lists all the local accounts, so you can easily tell which account is a local Administrator. At this point I was still somewhat skeptical that it would work, but I tried it and sure enough it worked like a charm. I was able to reset the Admin password and then log on using the new password. I was stunned that anyone could so easily break into a server despite all of Microsoft's touted security features. Doing some more Googling, I discovered that this has been an 'open secret' for years. Of course, some would argue that it's not a security hole because you still need physical access to the system - but that's small consolation if you ever lose a laptop or have a break-in. You would think that at the very least Microsoft would encrypt the SAM database by default. Then these programs couldn't list the accounts, and you would have to know the name of the Admin account in order to reset it. So how do you stop someone from breaking into your computer this easily? Simply encrypt your system drive with BitLocker or a third-party encryption solution. BitLocker expects the built-in TPM security chip by default. Running it without one means enabling the 'Allow BitLocker without a compatible TPM' Group Policy option.

| CPU Temperature Error - Jul 23 |

My old system that's currently running Linux has had an issue for as long as I can remember. Whenever you'd boot it up, it would complain that the CPU temperature was too hot. It was always pegged at 105 degrees Fahrenheit. I'd press a key and it would continue on, and as far as I could tell it was operating just fine. So eventually I just went into the BIOS and told it to suppress that error message. But after putting it in the new Lian Li case, with easy access to the motherboard, I decided I'd finally get to the bottom of this error and resolve it once and for all. I did some Googling and the consensus seemed to be that the thermal paste was likely old and no longer working very well. Removing it and applying new paste should solve the over-temperature error. So I ran down to the local computer store and got some Arctic Silver. I removed my Zalman CPU cooler and the old paste, applied the new paste, reseated the cooler and powered it back up - only to find the error was still there. So then I thought maybe there was an issue with my cooler: maybe over time the bracket had warped and the cooler was no longer making proper contact with the CPU. To rule that out I bought a cheap Intel-style cooler off Amazon and waited a few days for it to arrive. Once it was here I repeated the process with the paste and powered it on - only to find the error was still there. Well crap.
Ok, maybe the CPU itself was the issue. So I hopped on eBay and found someone selling a 3.33GHz Core 2 Duo for cheap. It was only slightly faster than what I had, but this new(er) chip also had more cache, so I ordered it. I waited a week for it to arrive (actually it took longer than it was supposed to, and when I contacted the seller they refunded my money, so I got it for free). Then I swapped out the old chip, applied the paste, attached the cooler and powered it on - only to find the error was STILL there! At this point the only thing left to replace was the motherboard. So once again I hopped on eBay and found someone selling the same ancient motherboard - an Asus P5K-VM, which came out roughly a decade ago. I waited a week for it to show up and swapped in all the components, including additional memory I had also bought while searching eBay. I put in my new CPU, applied the paste, attached my CPU cooler, fired it up - and the error was gone! The nice thing about mucking around with a system this old, assuming you can still find parts, is that it's relatively cheap to do what I did and replace the board and processor. In the end I spent less than $100 and got the fastest Core 2 Duo chip ever made in the process. Now the error is gone, and I no longer have that nagging worry in the back of my head that I'm slowly killing my system. The moral: if you get a similar temperature error, new paste will likely fix it. If that fails, a failed temperature sensor on your motherboard is the likely culprit.

| VMware Issues - Jul 8 |

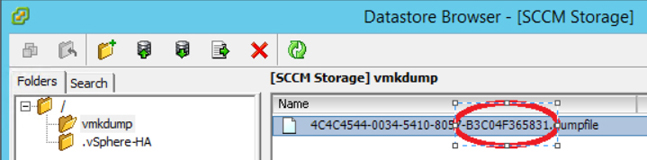

I was working in the vSphere console recently, doing some cleanup. There was a VM that had been powered off for a while and was no longer needed. After deleting the VM from disk, I went to delete its associated datastore but was presented with this error message: "The resource 'Datastore Name: <name> VMFS uuid: <ID>' is in use. Cannot remove datastore 'Datastore Name: <name> VMFS uuid: <ID>' because the file system is busy. Correct the problem and retry the operation." Hmm. Now what? After a bunch of Googling I came across the solution. I browsed the datastore and sure enough there was a coredump file in there. It had to be removed somehow.

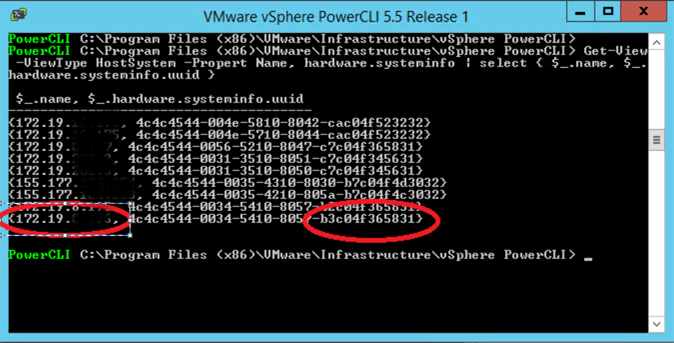

But first I had to determine which host had the lock on it. In PowerCLI I ran the following command: `Get-View -ViewType HostSystem -Property Name, Hardware.SystemInfo | Select-Object Name, @{Name='UUID'; Expression={$_.Hardware.SystemInfo.Uuid}}`

This listed all of the hosts and their UUIDs, which I used to match the last part of the dump file's UUID to the correct host. I then SSH'd into that host and ran this command to remove the file: `esxcli system coredump file remove --force`. After that I was able to delete the datastore and detach it from the storage adapter. I then took the corresponding volume on the SAN offline and finally deleted it to recover the space. So if you get this error message when trying to delete a datastore, check whether there's a coredump file sitting in there.
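Once you have the host list, the matching step can be scripted. The hedged Python sketch below compares the last hyphen-separated segment of the UUID in the dump file's name against each host's system UUID, mirroring the manual match described above. It assumes the file is named `<uuid>.dumpfile`. The host names and UUIDs in the example are made up.

```python
def last_segment(uuid: str) -> str:
    """Final hyphen-separated block of a UUID, lower-cased for comparison."""
    return uuid.strip().lower().rsplit("-", 1)[-1]


def find_lock_holder(dump_file_name: str, host_uuids: dict) -> list:
    """Return the host(s) whose system UUID ends like the dump file's UUID.

    Assumes a file name of the form "<uuid>.dumpfile"; host_uuids maps host
    name -> the Hardware.SystemInfo.Uuid value reported by PowerCLI.
    """
    dump_uuid = dump_file_name.split(".", 1)[0]
    wanted = last_segment(dump_uuid)
    return [host for host, uuid in host_uuids.items() if last_segment(uuid) == wanted]


if __name__ == "__main__":
    hosts = {  # hypothetical host names and UUIDs
        "esx01": "4c4c4544-0042-3510-8048-b4c04f4e4b32",
        "esx02": "4c4c4544-0053-4410-8052-c2c04f395231",
    }
    print(find_lock_holder("5a1b2c3d-0000-1111-2222-b4c04f4e4b32.dumpfile", hosts))
```

The function returns a list rather than a single name, so an empty result (no match) or more than one match is easy to spot before you SSH into anything.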

| SCCM Fun - Jun 11 |

Got back from holidays to find our SCCM server acting up. More specifically, WSUS wasn't syncing the latest patches from Microsoft. Great, just what I want to deal with on my first day back at work. I took a peek in the SCCM logs and this error kept showing up over and over again: Message ID: 6703. So I did a bunch of Googling on this error. Usually I end up spending hours looking at outdated or simply incorrect solutions, but this time I found the answer fairly quickly. As the article suggested, I checked the Application Pools in IIS Manager and sure enough the WsusPool was stopped. So I increased its Private Memory Limit from the default of 1843200KB to the recommended 4000000KB (roughly 4GB) and restarted the pool. I waited a few minutes, and on the next scheduled synchronization attempt I could see that it was syncing properly. A rare troubleshooting session where I was able to find the answer and resolve the problem fairly quickly.

| Fun With Cases - Jun 4 |

Although I have my fancy iMac and new gaming system, I still occasionally fire up my old PC, which is currently running Linux Mint. It's been housed for the longest time in a shiny small form factor (SFF) case. Recently I decided to remove it from there and instead install it on a Lian Li test bench case that I bought years ago. I can't even remember why I bought it originally, although I'm guessing at the time I was planning on buying a bunch of peripherals and upgrades and testing them. The nice thing about this case is that it's completely open and you can easily access all the components. A traditional tower rig is somewhat of a hassle: you have to crawl under the desk, unhook everything, and lug it up onto the desk to get inside. Even worse is an SFF case, where everything is so tightly crammed in that you have to take most of it apart just to reach one peripheral. As is typical with most of these cases, the instructions are fairly minimal with a few illustrations thrown in. It took me a while, mostly through trial and error, to figure out what went where and how it all fit together.

There's an optional bracket, which I affixed at the back, that helps prevent it from tipping over. There's also space at the front for an optional interface board, which did not come with the product. Other than that there's not much to it. The handle is a nice feature that makes it easy to move around, and the aluminum construction is lightweight. About the only negative is that the PCI slot brackets rattle whenever the DVD drive spins up. But as I only really use the drive when I install a new OS, it's not a big deal. As you can see from the picture, it's not your typical case, and I dig its industrial look. On bootup my system has forever complained about a CPU temperature error, and I've been meaning to remove the CPU and apply new thermal compound to fix it. Now that the motherboard is installed in this new setup, that should be a much easier process than before.

| Exchange Mystery - Apr 25 |

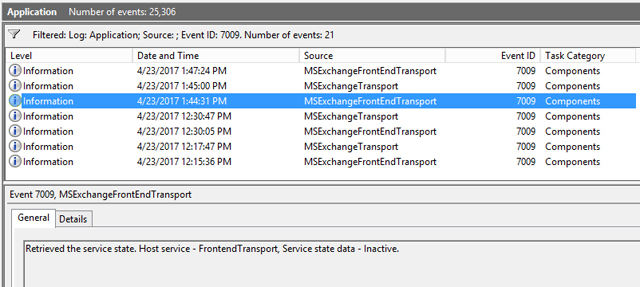

Had a bit of a scare over the weekend. I was applying the latest updates to our Exchange 2010 servers and attempting to apply the latest cumulative update to our Exchange 2013 Hybrid server. The 2010 updates installed just fine, but when I went to install the 2013 one it popped up a Dr. Watson error. Not having time to troubleshoot, I simply reverted to the VM snapshot I had created beforehand. Not long after it came back up, I noticed incoming email from the Internet was no longer working. Our mail smarthost is configured to forward Internet email through the 2013 server. The snapshot I had reverted to had been working fine, so at first I didn't suspect the Hybrid server. Instead I focused on the 2010 updates and possibly last week's security updates. Checking the mail queue on the smarthost showed '421 4.3.2 Service not active (in reply to MAIL FROM command)' errors. So I then tried sending a test email via Telnet, which failed. Ok, so I knew it must be the Hybrid server, but I didn't notice any errors in the Event Log and I double-checked that all the services were running. After searching through article after article on Google I finally stumbled across the problem. As Exchange 2013 is fairly new to me I'm still learning all of its nuances, but apparently services can be running but not actually working. It's called 'maintenance mode', and it makes the various server components inactive while updates are applied.

There are two ways to verify whether that's what's going on. One is the Event Log: look for event ID 7009, which shows the state as Inactive. The other is the Exchange Management Shell, using `Get-ServerComponentState -Identity <ServerName>`. In my case every single component was Inactive. Note that simply restarting does NOT fix the problem; you have to manually intervene and make the components active again. I then ran into my second issue. All the articles I found said to simply run `Set-ServerComponentState` and set the ServerWideOffline component to Active. But it wasn't working for me. Finally I found the one article that solved the problem. Usually in maintenance mode everything is inactive except for the Monitoring and RecoveryActionsEnabled components, but in my case those were inactive as well. If those two components aren't active, setting ServerWideOffline to Active will not bring everything back; you have to make all three components active. Once I did this and restarted both the FrontEndTransport and Transport services, email flow was restored. I still don't know why I ended up in this state, but at least now I know how to look for it and fix it.
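The fix described above boils down to a small check over three components: ServerWideOffline, Monitoring and RecoveryActionsEnabled. Any of them that isn't Active has to be set back to Active. The minimal Python sketch below captures that logic. It assumes you've copied the component/state pairs out of the `Get-ServerComponentState` output into a dictionary, and the states in the example are illustrative. The actual fix is still done in the Exchange Management Shell with `Set-ServerComponentState`.

```python
# The three components that must all be Active for the server to come back.
REQUIRED_COMPONENTS = ("ServerWideOffline", "Monitoring", "RecoveryActionsEnabled")


def components_to_activate(states: dict) -> list:
    """Return the required components that are not currently Active.

    `states` maps component name -> state string as shown by
    Get-ServerComponentState; a component missing from the map is treated as
    not Active so it gets flagged rather than silently skipped.
    """
    return [c for c in REQUIRED_COMPONENTS if states.get(c, "").lower() != "active"]


if __name__ == "__main__":
    typical_maintenance = {"ServerWideOffline": "Inactive", "Monitoring": "Active",
                           "RecoveryActionsEnabled": "Active", "HubTransport": "Inactive"}
    everything_inactive = {name: "Inactive" for name in REQUIRED_COMPONENTS}
    print(components_to_activate(typical_maintenance))   # ['ServerWideOffline']
    print(components_to_activate(everything_inactive))   # all three
```

In the usual maintenance-mode case only ServerWideOffline is flagged, which matches the advice in most articles. In the situation described in the post, all three come back.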

| iCloud Apocalypse??? - Mar 29 |

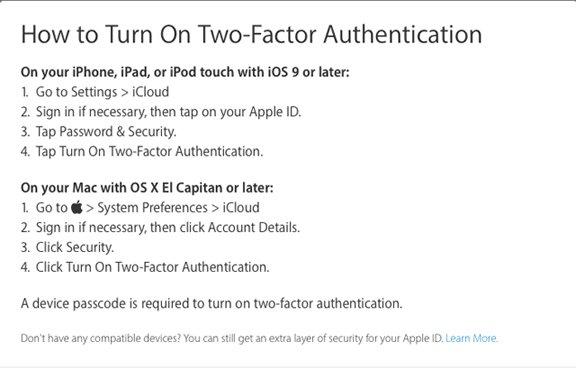

Recently news broke that a group of hackers had supposedly compromised up to 300 million iCloud accounts. They were threatening to remotely wipe any devices linked to those accounts unless Apple paid a ransom by April 7th. Most credible sources dispute that they could carry out that threat, suggesting they may have gotten hold of a few accounts at most. Whether or not the hack and its potential scope are real, it serves as a timely reminder that it's never a bad idea to improve your security when you can. Here are some best practices for securing your iCloud account and iDevices:

I guess we'll know in a week or so whether it's doom and gloom or a lot of fuss over nothing. Still, following these few easy steps will go a long way toward ensuring your data and your accounts are protected as much as possible.

| Safari Weirdness - Mar 11 |

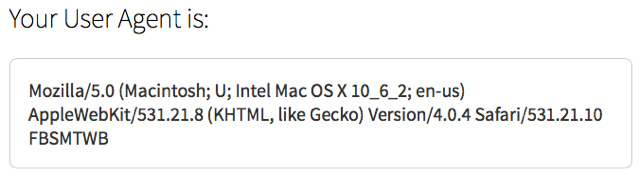

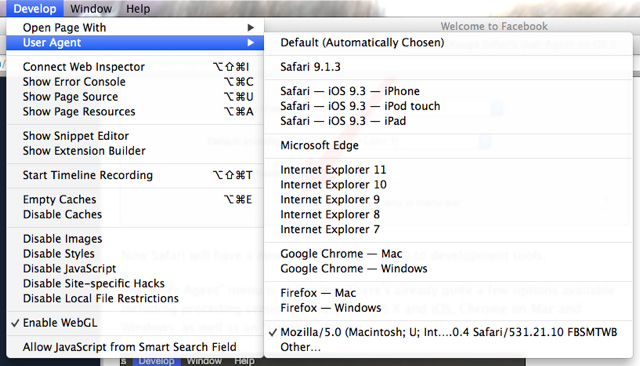

A couple of days ago I was surfing the web on my old (but much loved) iMac, which is running OS X Lion. I went to log into Facebook and was somewhat confused that it was showing the mobile website instead of the normal desktop website. I didn't think much of it at the time. But a few days later my wife was on our new iMac, which is running OS X Mavericks, and said 'why does my Facebook look all weird?' Sure enough, it was also showing the mobile version. But the odd thing was that when I logged on with my account it showed normally. Same system, showing the mobile site for one account and the desktop site for another. At this point my curiosity was piqued, and I had to figure it out because it was bugging the heck out of me. My guess was that Facebook had arbitrarily cut off support for older browser versions. On systems it detected as not meeting the requirements, it showed the mobile site, which in theory would work better on an older browser (only problem is it's ugly as hell). But my new iMac is fairly modern and, again, it worked fine under my account. So what was going on? I logged on with the wife's account and went to install Firefox to try a different browser. But when I got to the Firefox download page it said 'Your system doesn't meet the requirements to run Firefox'. What the heck?
I logged on with my account and went to the Firefox download page, and it didn't display that message. So I took to Google, did a bunch of searches, and after a while stumbled across a link that pointed me in the right direction. Whenever you go to a website, your browser 'broadcasts' a string identifying a bunch of info, including the OS you're running and the browser version you're using. One of the articles pointed me to a website that displays that info for you. While logged on with the wife's account, this is what it showed:

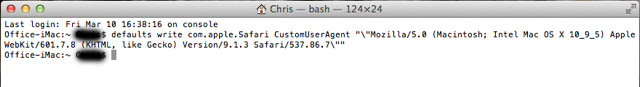

It was saying that my Mac was running Snow Leopard, a version of OS X from 8 years ago!! I went to the same website while logged on with my account and it showed the correct information. So obviously something weird was going on with the wife's account. I did some more digging and found that in Safari's preferences, on the Advanced tab, you can tell it to show the Develop menu. Once that's showing, you can use it to change the information sent to the websites you visit - the user agent string. But instead of Default being selected, it was showing a custom user agent string. When I changed it to Default and went to Facebook, everything showed correctly - so I knew this was definitely the issue. Unfortunately, whenever I exited Safari it would revert to that custom string.
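A site can tell Snow Leopard from Mavericks just by reading the user agent string. The small Python sketch below pulls the OS X version out of that string, roughly the kind of check a site might run before deciding which version of a page to serve. The sample string in the example is an illustrative Snow Leopard-era Safari user agent, not the exact one from the screenshot. The name table only covers 10.6 through 10.9.

```python
import re

# Partial map of OS X release names, enough for this example.
OSX_NAMES = {
    (10, 6): "Snow Leopard",
    (10, 7): "Lion",
    (10, 8): "Mountain Lion",
    (10, 9): "Mavericks",
}

# Safari writes the version with underscores ("10_6_8"); some browsers use dots.
MAC_VERSION_RE = re.compile(r"Mac OS X (\d+)[_.](\d+)(?:[_.](\d+))?")


def macos_from_user_agent(user_agent: str):
    """Return ((major, minor, patch), release name) claimed by a user agent,
    or None if the string doesn't claim to be OS X."""
    match = MAC_VERSION_RE.search(user_agent)
    if match is None:
        return None
    major, minor = int(match.group(1)), int(match.group(2))
    patch = int(match.group(3)) if match.group(3) else 0
    return (major, minor, patch), OSX_NAMES.get((major, minor), "unknown")


if __name__ == "__main__":
    sample = ("Mozilla/5.0 (Macintosh; Intel Mac OS X 10_6_8) AppleWebKit/534.59.10 "
              "(KHTML, like Gecko) Version/5.1.9 Safari/534.59.10")
    print(macos_from_user_agent(sample))
```

Because the site only sees what the string claims, a stale custom user agent makes a modern Mac look like a Snow Leopard machine.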

I did some more digging and found out that you can overwrite the custom user agent string. So I simply copied the string that was showing when logged on with my account into the following command in Terminal: `defaults write com.apple.Safari CustomUserAgent "\"<paste string here>\""`

Once that was done I opened Safari, checked the Develop menu, and sure enough it was now showing the updated string. I opened Facebook and it displayed properly. At this point I was feeling pretty happy with myself for getting the problem sorted. But then I thought about it some more, and I was still annoyed that it kept defaulting to this custom string instead of the 'Default (Automatically Chosen)' option. After some more digging I came across the command to delete the custom string: `defaults delete com.apple.Safari CustomUserAgent`. Once that was done I re-opened Safari and now the default option was chosen. So everything is back to normal. What caused this issue in the first place? Who knows. I didn't make any changes and she's claiming innocence. But at least now she's happy that she can go back to watching adorable cat videos on Facebook without the horrific mobile site.

| Deployment Fun - Feb 17 |

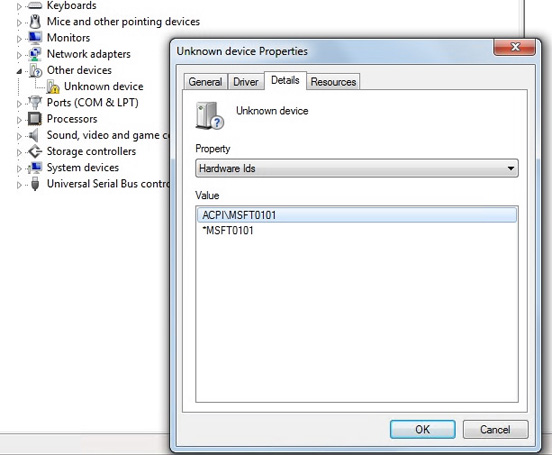

Recently I was given a new laptop model - a Dell Latitude E5470 - to add to our enterprise OS image. We eventually plan to use SCCM for OS deployment: upgrades, wiping new systems and putting our corporate image on them. For now we're still using the basic Microsoft Deployment Toolkit (MDT). Typically when I get a new model I'll install the OS from DVD and, when it's finished, take a peek in Device Manager to see which drivers are missing. Then I'll download them from the vendor, inject them into MDT's list of drivers, image the system, and verify everything gets installed properly. But for some reason there was one entry in Device Manager whose required driver I could not figure out:

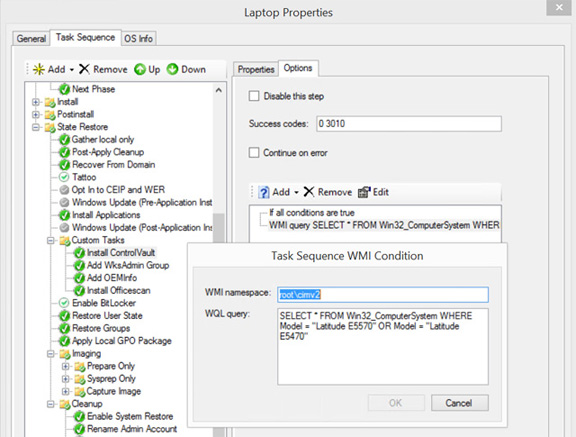

After some furious Googling I came across a post from someone having the same issue. While these newer desktops and laptops still have Windows 7 drivers, it's becoming more of a pain trying to shoehorn such an old OS onto them. In this case the laptop's BIOS supports TPM 2.0, the latest version of the cryptography platform for securing and encrypting these systems, but Windows 7 has no idea about this newer version. The solution is a hotfix from Microsoft. After I imported the hotfix into MDT as a package, the question mark in Device Manager was gone once imaging completed. The other issue I ran into is that this laptop has a fingerprint reader you can use to unlock it and log in to Windows. Pretty cool stuff, but again, a pain to implement with MDT. I ran Dell's ControlVault drivers package and tracked down the driver names in Device Manager. I located the cab, inf and sys files, etc., and imported them into MDT. Even so, MDT still didn't install the drivers properly. You have to run the executable, which is fine when doing it manually but a problem when trying to automate it. So what I ended up doing was creating an application for it and adding it to the Task Sequence. The other problem was how to prevent it from applying to models that don't have a fingerprint scanner. While MDT supports installing a database and using it to record the models of the various systems, that's something I never implemented. So my workaround was to add a WMI query to the Task Sequence instead:

As we have two laptop models with the fingerprint scanner, I also added the second model to the query. Now the correct software gets installed on the systems that require it!
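The post doesn't reproduce the exact query. A Task Sequence WMI condition of this kind, though, typically filters on the `Model` property of the `Win32_ComputerSystem` class. The hedged Python sketch below builds such a query for a list of model names. "E5470" comes from the post; the second model isn't named, so "SECOND-MODEL" is a hypothetical placeholder. The sketch doesn't escape quotes inside model names.

```python
def build_model_query(models: list) -> str:
    """Build a WQL query matching any of the given model names.

    Win32_ComputerSystem.Model holds the model string the firmware reports
    (e.g. "Latitude E5470"); LIKE with % wildcards tolerates a prefix such as
    "Latitude ". Model names containing quotes are not escaped.
    """
    if not models:
        raise ValueError("need at least one model name")
    conditions = " OR ".join(f"Model LIKE '%{model}%'" for model in models)
    return f"SELECT * FROM Win32_ComputerSystem WHERE {conditions}"


if __name__ == "__main__":
    # "SECOND-MODEL" is a hypothetical placeholder for the other laptop model.
    print(build_model_query(["E5470", "SECOND-MODEL"]))
```

Matching on a partial model string keeps the condition short, at the cost of possibly matching similarly named models, so the name fragments are worth checking against what Device Manager or `Win32_ComputerSystem` actually reports.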

| 3D TV Is Dead - Jan 29 |

LG and Sony, both industry heavyweights, recently announced that they will no longer make 3D-capable TVs, so the days of 3D TV are over. Always a niche market, 3D really burst onto the scene after the success of Avatar. Suddenly manufacturers saw a potential new way to market their products to the public. But despite their best efforts, it never ended up being more than a gimmick. Maybe it was the lack of content. Maybe it was the hassle of needing the trifecta of required equipment (TV, player, disc), or the dearth of movies filmed with 3D cameras. Whatever the reason, it's now consigned to the dustbin of technological history. Personally, I'm somewhat disappointed. While 3D titles make up only a small portion of my Blu-ray library, I have from time to time enjoyed putting on my glasses and watching one of them. Sadly my current setup is the last of several eras. In addition to being 3D capable, my Panny ZT60 is one of the last plasmas made. My Pioneer BDP-85FD is also one of the last Blu-ray players not requiring online activation, which is a whole other annoyance and topic for discussion.

Adding insult to injury, we'll still be forced to see movies at the theatre in 3D, because it's a cash cow for studios. You might have noticed that it's almost impossible to see a movie in 2D anymore, and if you can, it might be a single showing in the most useless time slot. They get to charge you more even though in any given year you can literally count on one hand the number of movies that were actually filmed in 3D. In almost every case, the movie you're watching and paying more for was converted to 3D during post-production (they cheated). Now I think I'll go spin up my 3D copy of Hugo and ponder the end of an era.